Most AI chat systems built on top of documents have the same silent problem: they find text, but they don't really understand it. We spent time rethinking how Attlas processes and retrieves information, and the difference is significant.

Here's what we changed, and why it matters.

The old way: cutting documents like a machine

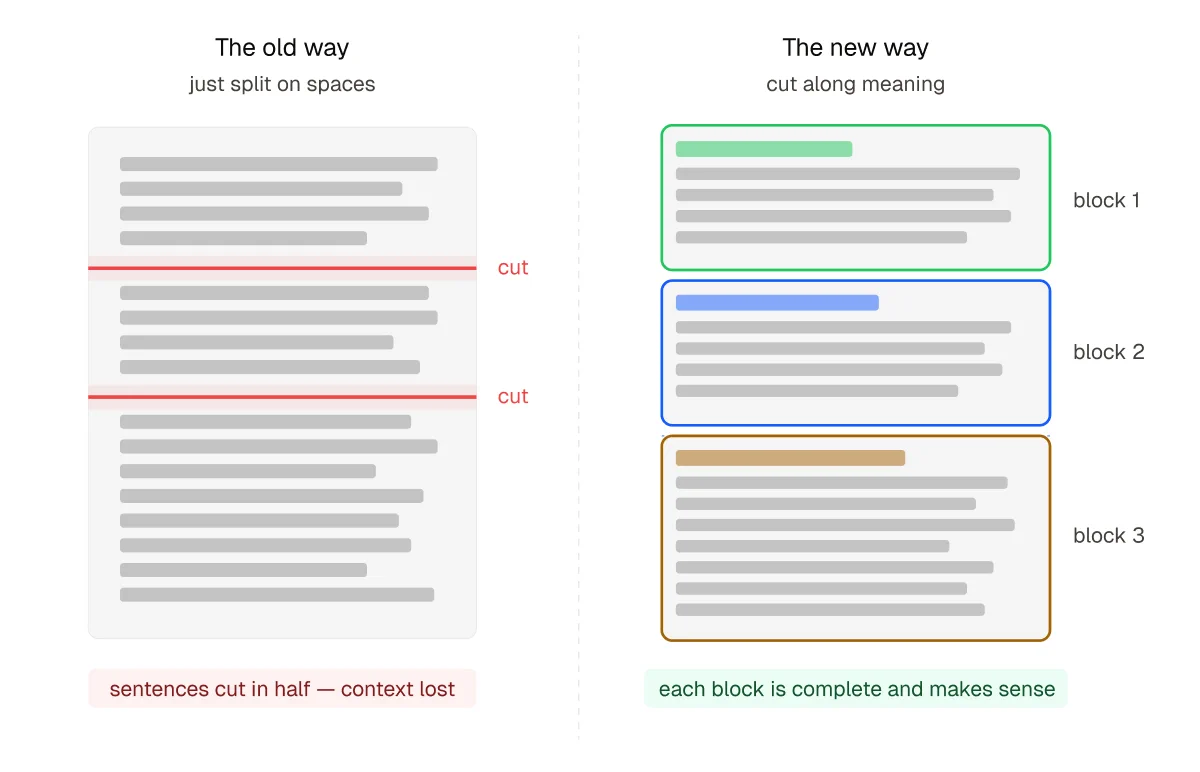

When a user uploads a document (a real estate listing, a legal contract, a training course), the classic approach is simple: cut the text into fixed-size pieces of roughly 1,000 characters, store them, and search through them when someone asks a question.

It works. Until it doesn't.

The problem is that a machine doesn't care about meaning when it cuts. It cuts in the middle of sentences. It separates a question from its answer. It splits a table in two. And when the AI tries to answer based on those broken pieces, the result feels off, incomplete, sometimes wrong.
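To make the failure concrete, here is a minimal sketch of fixed-size cutting. The function name and the tiny chunk size are ours, chosen only so the effect shows up on a short string; it is an illustration, not the code Attlas used.

```python
def fixed_size_chunks(text: str, size: int = 1000) -> list[str]:
    """Cut text every `size` characters, ignoring sentences, Q&A pairs and tables."""
    return [text[i:i + size] for i in range(0, len(text), size)]


sample = "Q: What is the price? A: The price is 320,000 euros."

# A deliberately small size so the problem is visible on one line of text.
pieces = fixed_size_chunks(sample, size=22)

for piece in pieces:
    print(repr(piece))
```

With these inputs the question lands in one piece and its answer is spread across the next two, with the price itself cut in half.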

What we rebuilt

1. Cutting along meaning, not character count

Instead of counting characters, we now cut along the natural structure of the document.

If a document has sections and subsections, we respect them. If it has paragraphs, we group them intelligently. Tables are never split. And we only fall back to mechanical cutting as a last resort, when there's truly no structure to follow.

A short document with no headers? One single chunk. A 20,000-character legal brief with ten sections? Ten meaningful chunks, each complete on its own.
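The idea can be sketched in a few lines. This is a simplified illustration under our own assumptions: headers are markdown `#` lines, paragraphs are separated by blank lines, and `max_chars` is a hypothetical size limit. The last-resort mechanical cut is left out, so an oversized paragraph simply stays whole here.

```python
import re

# ATX-style markdown headers: one to six '#' followed by a space and some text.
HEADER = re.compile(r"^#{1,6}[ \t]+\S", re.MULTILINE)


def split_by_headers(markdown: str) -> list[str]:
    """One chunk per header-delimited section; empty list if there are no headers."""
    starts = [m.start() for m in HEADER.finditer(markdown)]
    if not starts:
        return []
    # Keep any text that appears before the first header as its own section.
    bounds = ([0] if starts[0] > 0 else []) + starts + [len(markdown)]
    sections = [markdown[a:b].strip() for a, b in zip(bounds, bounds[1:])]
    return [s for s in sections if s]


def group_paragraphs(text: str, max_chars: int) -> list[str]:
    """Pack whole paragraphs together without exceeding max_chars where possible."""
    chunks, current = [], ""
    for para in re.split(r"\n\s*\n", text.strip()):
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def chunk_document(markdown: str, max_chars: int = 2000) -> list[str]:
    sections = split_by_headers(markdown)
    if sections:
        return sections                      # structure found: follow it
    if len(markdown) <= max_chars:
        return [markdown.strip()]            # short, no headers: one single chunk
    return group_paragraphs(markdown, max_chars)
```

A markdown table has no blank lines inside it, so it counts as one paragraph and is never split by this grouping. A production version would also need to ignore `#` lines inside code fences.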

2. Preparing answers to questions nobody has asked yet

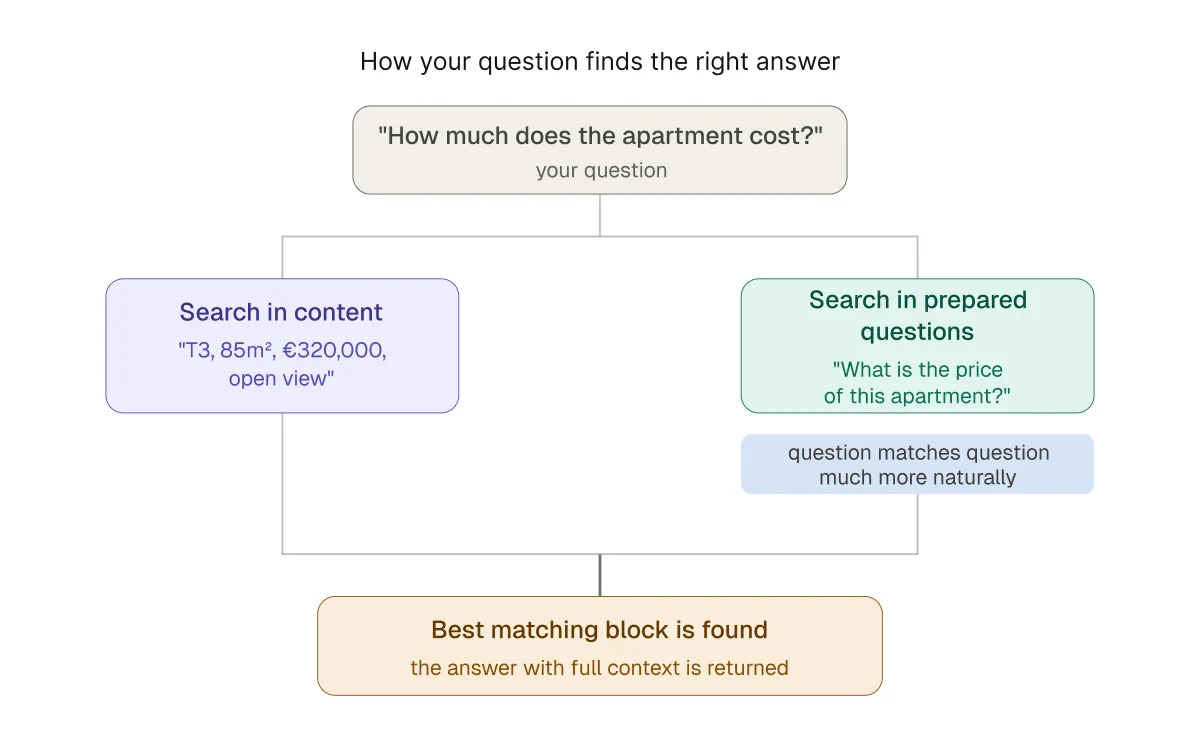

Here's the core insight: a question and its answer don't look alike.

A user asks "how much does the apartment on the third floor cost?" but the document says "T3, 85m², €320,000, open view." These mean the same thing to a human. To a search algorithm, they look very different.

So for every chunk of content we store, we now also ask Gemini to generate 3 to 5 questions that this chunk answers. These questions get stored and indexed alongside the original text.

When a user asks something, the system now searches in two places at once: the original content, and these pre-generated questions. Matching a question to another question is much more natural than matching a question to raw text.
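Here is a toy version of that idea. Everything in it is an assumption made for illustration: `fake_llm` stands in for the Gemini call (we do not show the real request), the questions it returns are made up, and plain word overlap replaces embedding similarity so the example runs without any external service.

```python
import re
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Chunk:
    content: str
    questions: list[str] = field(default_factory=list)


def enrich(content: str, generate_questions: Callable[[str], list[str]]) -> Chunk:
    """Attach up to five generated questions to a chunk of content."""
    return Chunk(content=content, questions=generate_questions(content)[:5])


def tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def overlap(a: str, b: str) -> float:
    """Jaccard word overlap: a crude stand-in for embedding similarity."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def fake_llm(content: str) -> list[str]:
    # Hypothetical output; a real system would ask a language model here.
    return [
        "How much does the third floor apartment cost?",
        "What is the surface area of the T3?",
        "Does the apartment have an open view?",
    ]


chunk = enrich("T3, 85m², €320,000, open view.", fake_llm)
query = "how much does the apartment on the third floor cost?"

content_score = overlap(query, chunk.content)
question_score = max(overlap(query, q) for q in chunk.questions)
print(content_score, question_score)
```

The user's query shares no words with the raw listing, but it shares almost all of them with one of the stored questions, which is why searching both places catches matches the content alone would miss.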

3. Giving the AI the thread of the conversation

Documents don't exist in isolated sentences. Pronouns, references, and context flow from one paragraph to the next.

Consider this: a document says "Marie Curie discovered radium" in one paragraph, and two paragraphs later says "She received the Nobel Prize for this discovery." If the system retrieves only the second paragraph, it has no idea who "she" is or what "this discovery" refers to.

Now, every chunk automatically carries the last two sentences of the previous chunk as silent context. The AI receives:

[Context: Marie Curie discovered radium in 1898.] She received the Nobel Prize for this discovery in 1911.
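A minimal sketch of how such a prefix could be built. The helper names are ours, and the sentence splitter is deliberately naive (it would break on abbreviations such as "Dr."); the sample text is invented for the demonstration.

```python
import re


def last_sentences(text: str, n: int = 2) -> str:
    """Return the last n sentences of text, using a naive punctuation-based split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return " ".join(sentences[-n:])


def with_context(chunks: list[str], n: int = 2) -> list[str]:
    """Prefix every chunk except the first with the tail of the previous chunk."""
    out = []
    for i, chunk in enumerate(chunks):
        if i == 0:
            out.append(chunk)
        else:
            out.append(f"[Context: {last_sentences(chunks[i - 1], n)}] {chunk}")
    return out


chunks = [
    "Radioactivity was a new field. Marie Curie worked in Paris. "
    "Marie Curie discovered radium.",
    "She received the Nobel Prize for this discovery.",
]
result = with_context(chunks)
print(result[1])
```

Only the last two sentences of the previous chunk are carried over, so the prefix stays short while still resolving "she" and "this discovery".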

4. Keeping summaries out of the way

For every document, we generate a dense summary using Gemini's 1-million-token context window, which means even a 500-page legislative document gets summarized completely, without truncation.

This summary is stored separately and used only for broad, general questions about the document. It no longer contaminates precise search results, which was a subtle but real problem before.

What this looks like in practice

| Situation | Before | After |
| --- | --- | --- |
| User asks with different wording than the document | Often missed | Found via pre-generated questions |
| Pronoun in retrieved text ("she", "this", "it") | AI guesses or hallucinates | Context provided automatically |
| Short document, simple question | Multiple fragmented chunks | Single clean chunk |
| General question about a long document | Random chunk returned | Summary injected directly |
| Table in a document | Potentially split mid-row | Always kept intact |

The architecture behind it

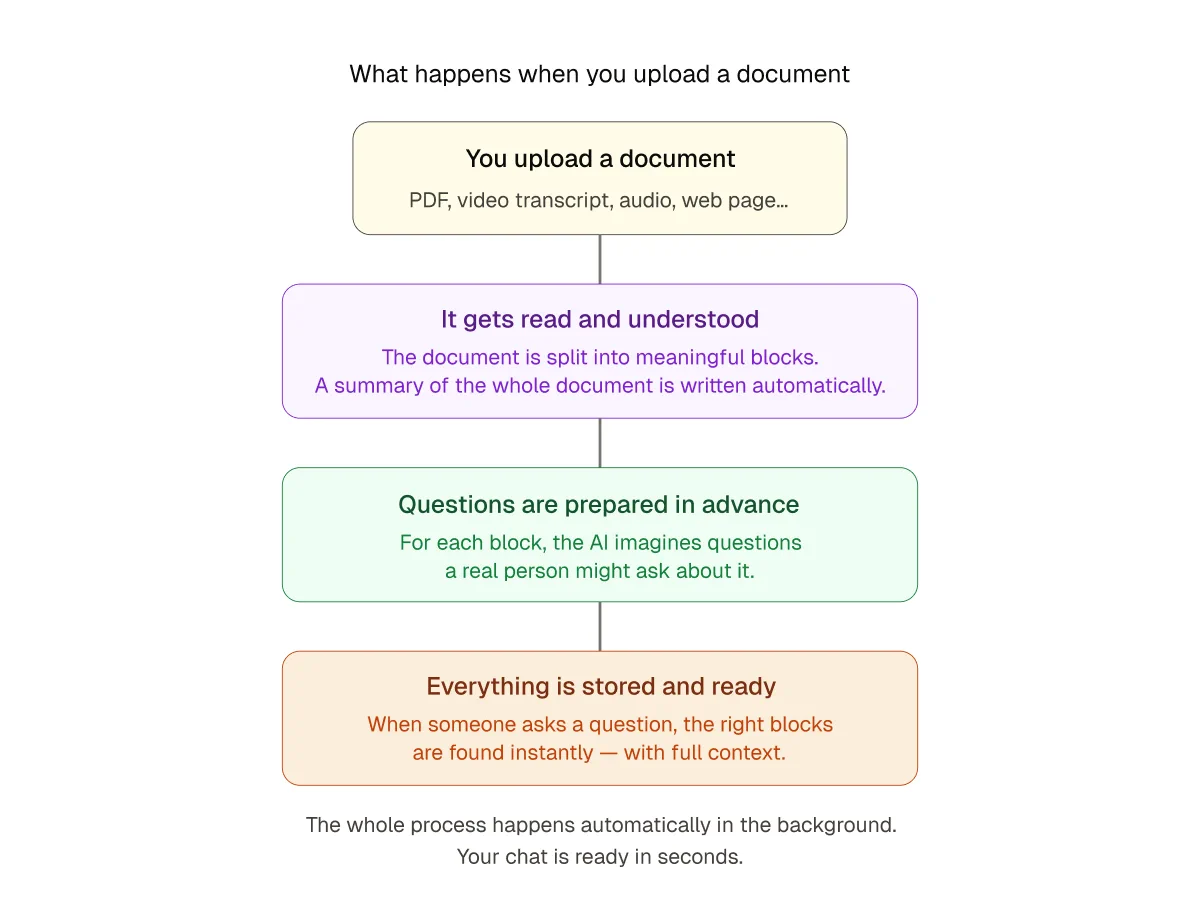

This entire enrichment pipeline runs as a single API endpoint. A document goes in as markdown text. What comes out in the database is a structured, enriched, search-ready set of chunks, with embeddings, pre-generated questions, contextual prefixes, and a document-level summary.

The search function queries both the content and the pre-generated questions in parallel, merges the scores, and reconstructs each chunk with its context before sending it to the AI.
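As a rough sketch of the parallel query and merge step: the two search callables below are hypothetical stand-ins for real index lookups, and taking the higher of the two scores per chunk is our assumption about what "merging" could mean, not a description of the exact formula in production.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Scores = dict[str, float]  # chunk id -> similarity score


def hybrid_search(
    query: str,
    search_content: Callable[[str], Scores],
    search_questions: Callable[[str], Scores],
    top_k: int = 3,
) -> list[tuple[str, float]]:
    # Run both lookups at the same time.
    with ThreadPoolExecutor(max_workers=2) as pool:
        content_future = pool.submit(search_content, query)
        question_future = pool.submit(search_questions, query)
        content_scores = content_future.result()
        question_scores = question_future.result()

    # Assumed merge rule: keep the better of the two scores for each chunk.
    merged = {
        cid: max(content_scores.get(cid, 0.0), question_scores.get(cid, 0.0))
        for cid in content_scores.keys() | question_scores.keys()
    }
    # Highest score first; ties broken by chunk id for a stable order.
    ranked = sorted(merged.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_k]


# Stub indexes returning fixed scores, only for demonstration.
def content_index(query: str) -> Scores:
    return {"c1": 0.2, "c2": 0.6}


def question_index(query: str) -> Scores:
    return {"c1": 0.9, "c3": 0.4}


top = hybrid_search("how much is the T3?", content_index, question_index, top_k=2)
print(top)
```

Chunk `c1` scores poorly on content but ranks first because one of its stored questions matches the query well. Other merge rules, such as a weighted sum, would fit the same structure.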

Why this matters for Attlas users

Attlas is used by real estate agencies, law firms, restaurants, professors, coaches, ministries, and more, all uploading very different kinds of documents in very different languages.

What they all have in common: they need their AI chat to actually know what's in their documents. Not approximately. Not most of the time. Reliably.

That's what this rebuild is about.